Mnemosyne is a Claude Code skill that turns your AI-assisted work into persistent memory by automatically storing architectural decisions, TRDs, risks, and findings in a local Obsidian vault. When you start a new session, Claude already knows what you learned before, eliminating context loss between sessions.

- Migration and technical work produces institutional knowledge that vanishes after AI sessions end; Mnemosyne captures this automatically by storing findings in Obsidian without extra effort

- The system consists of three pieces: an Obsidian vault with typed document folders, the Obsidian Local REST API plugin, and a Claude Code skill that reads the API key and manages vault operations

- Document types include projects, daily journals, meetings, ADRs, TRDs, risks, benchmarks, and writeoffs, each with structured templates and naming conventions for queryability

- CLAUDE.md routing rules make Obsidian-first behavior automatic: knowledge questions trigger the setup-obsidian skill, and a shell hook ensures pure code edits bypass documentation to avoid friction

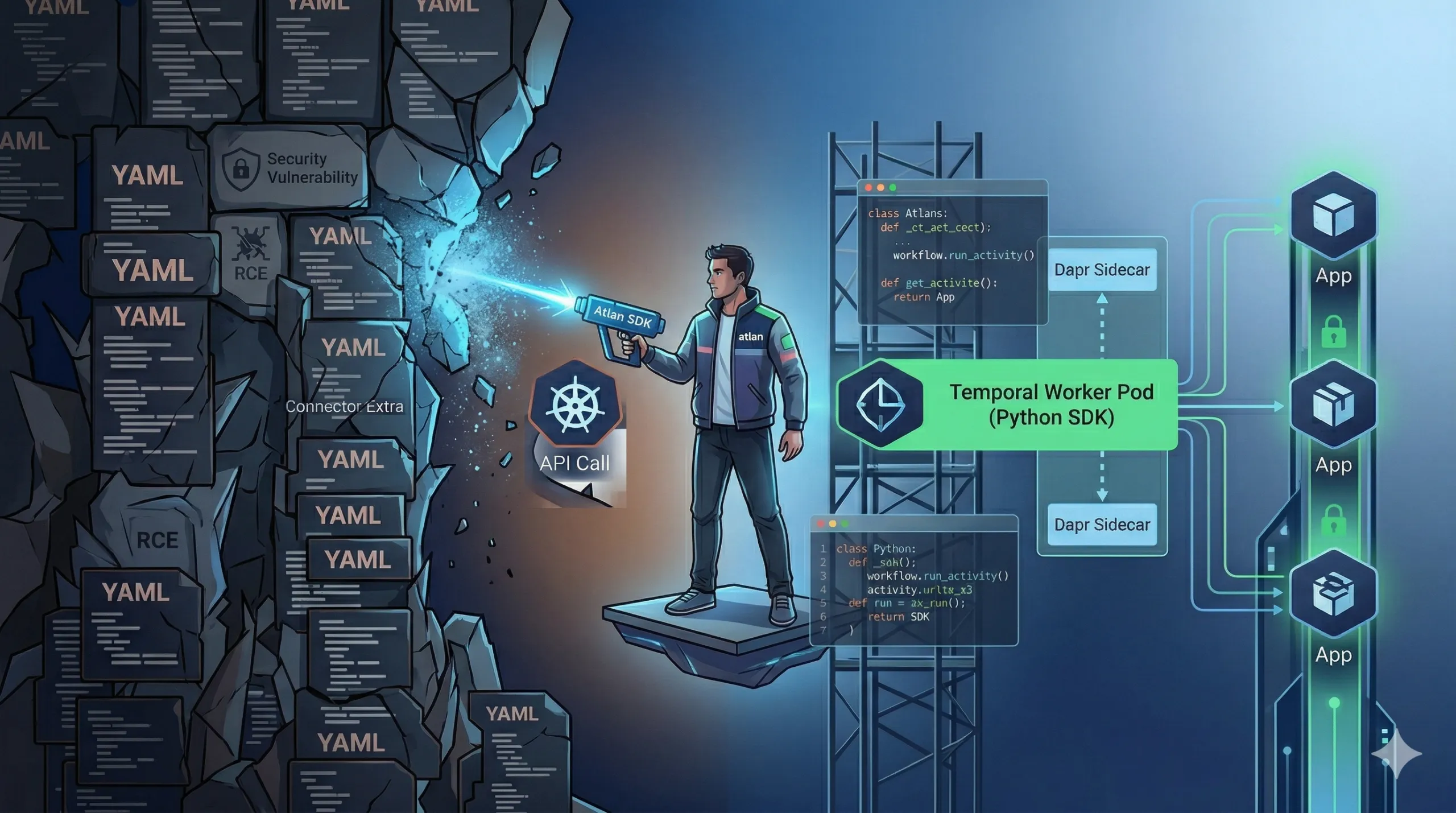

- Horizon 1 is personal local-first knowledge; Horizon 2 envisions connector-specific knowledge hubs; Horizon 3 imagines agentic infrastructure that triages tickets, synthesizes standups, and accelerates onboarding from accumulated vault content

Migration work has a familiar rhythm. You spend sessions with an AI assistant reverse-engineering APIs, mapping edge cases, documenting risks — building a real understanding of the system you’re moving. The AI is genuinely useful. Then the session ends, and all of that context disappears with it.

Next session, you begin from scratch, re-explaining the connector, restating the constraints, rediscovering gotchas you already uncovered days before, and that’s when you think, how to give AI context from previous sessions.

That’s the wall we kept hitting during our connector migration work. And the AI wasn’t the only thing that forgot. Our institutional knowledge was just as fragmented. Design decisions lived in Google Docs nobody revisited. Meeting outcomes were buried in Slack threads. Risk analyses existed but were not connected to the decisions they informed. Teams repeated discussions, forgot rationale, and spent standups re-syncing on context that should have been queryable instead.

We needed our AI assistant to remember our work — not just chat history, but the actual thinking: TRDs, ADRs, risk analyses, writeoffs, debugging journals, and migration notes. That problem became Mnemosyne: an AI second brain built on top of Obsidian and Claude Code.

Why this was hard

We were not trying to build another note-taking app. We already had plenty of places to write things down. The hard part was closing the gap between where knowledge lived and where we did the work with AI.

Several constraints showed up quickly:

- Knowledge is fragmented by default. Decisions, experiments, and failures were spread across Slack, Linear, Google Docs, PR descriptions, and personal notes. None of these were wired into our AI assistant. Every session ignored this history and treated us like a new user with no past context.

- Chat memory is not institutional memory. When the AI helped us understand a legacy system or a tricky migration, that understanding stayed in the chat. If we did not copy it somewhere permanent, it was lost when the session ended — non-transferable, not queryable by other engineers, and unreliable even for our own future reference.

- Asking humans to “remember to document” does not work. We knew that telling engineers to write better docs would fail. People already used Claude Code daily for design, testing, analysis, and reviews. If documentation required extra manual steps, it would not happen consistently.

- We needed structure without centralizing everything too early. We wanted a system that worked as a personal second brain from day one, but could eventually evolve into a connector-specific knowledge hub and then into agentic infrastructure. That meant enough structure to be queryable later, without forcing heavy upfront taxonomy work every time someone wanted to save a thought.

- Existing tools solved only parts of the problem. Search tools could find scattered docs, but they did not turn your ongoing AI-assisted work into a persistent knowledge base. Vector databases and RAG stacks were overkill for a personal workflow and would introduce operational overhead we did not want for individual engineers.

The core tension: we needed a system that felt like no extra work during a normal Claude Code session, yet quietly turned that work into durable, structured memory.

The constraint that shaped everything else was behavioral, not technical. Any system that asked engineers to step outside their normal Claude Code workflow would fail. The path of least resistance had to go through the knowledge base.

What we built: Mnemosyne as a Claude Code skill

We deliberately kept Mnemosyne small and local. It is not a new service, database, or app. It is a Claude Code skill called setup-obsidian that teaches the assistant how to read from and write to a local Obsidian vault through the Obsidian Local REST API plugin.

The design principle: make Obsidian the default destination for anything worth remembering, without requiring a separate command or mental context switch.

The architecture in one picture

At a high level, Mnemosyne connects three pieces:

- An Obsidian vault on the user’s machine, storing knowledge as plain markdown files, for example

~/Documents/claude-obsidian/. - The Obsidian Local REST API plugin, which exposes the vault over HTTP on

localhost:27123and requires an API key stored in the plugin config. - The

setup-obsidianClaude Code skill at~/.claude/skills/setup-obsidian/SKILL.md, which reads the API key, talks to the REST API, enforces document templates, and decides how and where to write knowledge.

There’s no /mnemosyne-note command. The system is invoked as /setup-obsidian or triggered automatically via routing rules in the user’s CLAUDE.md config and a shell hook.

Components

Obsidian Vault — a directory of plain markdown files that acts as the knowledge store. All project notes, ADRs, TRDs, risks, benchmarks, writeoffs, meeting notes, and logs live here.

Obsidian Local REST API Plugin — a community plugin that runs on localhost:27123, exposes endpoints to list, read, create, and update files in the vault, and stores an API key in <vault>/.obsidian/plugins/obsidian-local-rest-api/data.json, which the skill reads directly.

setup-obsidian Skill — a skill definition at ~/.claude/skills/setup-obsidian/SKILL.md that reads the API key from the plugin config, calls the REST API to create and update vault files, enforces naming conventions and folder structure, and handles two modes: initial setup and ongoing document creation.

CLAUDE.md Routing Rules — a global config file in ~/.claude/CLAUDE.md that encodes an “Obsidian-first rule”: knowledge questions, analysis requests, and investigations first invoke the setup-obsidian skill so results are stored; requests to write, note, save, or document something trigger the skill immediately; purely mechanical commands like git commit or npm build bypass Obsidian; code fixes that produce new findings execute, then save the findings into Obsidian.

UserPromptSubmit Hook — a shell script at ~/.claude/hooks/obsidian-first-check.sh that runs on every user prompt, pattern-matches for knowledge and documentation intents, and nudges the session toward Obsidian-backed writes. Pure code editing commands are exempted.

This combination means that the path of least resistance in Claude Code automatically goes through Mnemosyne.

Document types and vault structure

To keep the knowledge base queryable, we defined explicit document types, each with a structured template and folder conventions inside the vault.

Supported types include:

| Type | Folder | Naming | Purpose |

|---|---|---|---|

project | Projects/<Name>/ | subdirectory per project | overview with linked ADRs, TRDs, risks |

daily | Daily Journal/ | YYYY-MM-DD.md | morning check-in, work log, end-of-day review |

weekly | Weekly Plans/ | YYYY-WXX.md | weekly goals, plan, and retrospective |

meeting | Meeting Notes/ | YYYY-MM-DD - <Title>.md | agenda, discussion, action items, decisions |

adr | Architecture/ | ADR-NNN - <Title>.md (auto-incrementing) | architecture decision records |

trd | TRDs/ | TRD - <Title>.md | technical design documents |

risk | Risk Analysis/ | Risk - <Title>.md | risk register with likelihood and impact scoring |

benchmark | Benchmarking/ | Benchmark - <Title>.md | performance comparisons with methodology |

writeoff | Writeoffs/ | Writeoff - <Title>.md | abandoned approaches and lessons learned |

note | Notes/ | free-form | general notes |

Documents can also be scoped under projects, for example Projects/Mnemosyne/Architecture/ADR-001 - Initial Obsidian Integration.md.

A deployed vault might look like:

Weekly planning

├── Meeting Notes/ # Meeting records

├── Architecture/ # ADRs

├── TRDs/ # Technical designs

├── Risk Analysis/ # Risk assessments

├── Benchmarking/ # Comparisons

├── Writeoffs/ # Abandoned approaches

├── Projects/ # Project-scoped docs

│ ├── Mnemosyne — Vision and Roadmap.md

│ ├── Obsidian as a Second Brain with Claude Code.md

│ └── Why an AI Second Brain.md

├── Notes/ # General notes

├── RCA/ # Root cause analyses

└── Connector-Source-Info/ # Connector-specific knowledgeThis gives Claude a predictable map of where to read and write different kinds of knowledge.

Runtime behavior

Mnemosyne focuses on two core flows: document creation and session start behavior.

Document creation

When the skill needs to create or update a document:

- It resolves the vault path from Claude Code memory, via a

reference_obsidian_vault.mdfile. - It reads the API key from the Obsidian plugin config in the vault.

- It verifies that the REST API is running with a simple GET to

http://127.0.0.1:27123/. - It lists existing items in the target folder to detect duplicates or opportunities to append.

- It asks whether to append to an existing document or create a new one.

- It issues a PUT request to

http://127.0.0.1:27123/vault/<path>with templated content for the selected document type. - It suggests cross-links to related documents to connect decisions, risks, and designs.

The key decision was to always offer to append rather than blindly creating new files. That keeps the knowledge base from exploding into hundreds of near-duplicate notes — a risk register or ongoing TRD stays in one place, growing richer with each session rather than fragmenting across many files.

Session start

At session start, Claude Code reads the vault and the CLAUDE.md file so it can:

- Load context about active projects, open risks, and past decisions.

- Understand the vault structure, naming conventions, and document types.

- Use the accumulated history to answer questions like “what did we learn from the last time we touched this connector?” or “what was the reasoning behind choosing this extraction pattern?”.

In practice, the CLAUDE.md file for a real migration project grew from around 5 lines to around 80 lines as we iterated, teaching the assistant more about the project over time.

What Mnemosyne is not

We explicitly decided to not build several things at this stage:

- It is not a

/mnemosyne-notecommand or a separate app. - It is not a dedicated

mnemosyne/directory structure. It uses typed folders likeArchitecture/andTRDs/instead. - It is not integrated with our Automation Engine review flows or CI/CD.

- It is not a vector database or RAG system. The skill relies on plain text search via the Obsidian API and the assistant’s file reading.

- It is not a shared team tool yet. It is intentionally personal, designed as Horizon 1 of a broader roadmap.

These constraints kept the implementation shippable and focused on solving the immediate problem: making one engineer’s life better by turning their AI-assisted work into durable memory.

What changed in real work

The system only mattered if it actually changed how work happened. We validated Mnemosyne during real connector migration work, not a toy demo.

How to give AI context

Using Obsidian as a second brain meant our AI assistant no longer started from a cold state each session. Architecture decisions, risk analyses, and technical designs accumulated in the vault. Instead of keeping this context in chat, we captured it in structured documents that the assistant could read later.

Over time, the CLAUDE.md config for a migration project expanded significantly. It began as a minimal setup and evolved into a detailed description of the project, constraints, and key files to prioritize. This iterative growth taught the assistant about our specific project, which made its future suggestions and analyses more grounded.

This changed how we worked in a few ways:

- When we asked “look at my project notes for the connector migration and tell me what is still unresolved”, Claude already knew the folder structure and naming conventions. We did not need to paste content or rely on memory.

- When we used the AI to understand a complex legacy system, we captured the understanding in TRDs, ADRs, and risk analyses inside Obsidian. The knowledge survived beyond the chat session and could be queried later by us or others.

- During debugging, we could keep a running journal that logged symptoms, dead ends, and fixes, turning one-off investigations into a queryable incident history.

Our process for validating assumptions also benefited. In one example, we ran a large number of test cases to validate API behavior like timestamp bumping and consistency between endpoints before writing production code. Having those findings stored in the vault meant they were accessible for future related work rather than forgotten in an old chat transcript.

Habit without extra effort

The most important outcome was not a specific metric, but a shift in behavior: documenting stopped feeling like a separate task.

Since everyone already used Claude Code daily, making setup-obsidian the default path for storing outputs meant the knowledge base populated as a side effect of work people were doing anyway. No one needed to “remember” to document. Design reviews, risk analyses, and migration notes naturally flowed into Obsidian through the skill.

This validated a key strategic bet: if we could make the personal second brain genuinely useful for one engineer in real work, then a connector-specific knowledge hub based on the accumulated vault content would become viable.

We do not have formal benchmarks for time saved or incident reduction yet. What we saw instead were qualitative gains:

- Less time spent re-explaining project context to the AI.

- Fewer repeated investigations into the same connector issues.

- Easier handoffs, since we could synthesize a project’s history from the vault instead of scheduling long brain-dump calls.

Where this goes next

Mnemosyne today is Horizon 1: a personal second brain that runs locally via Obsidian and a Claude Code skill. The roadmap sketches two further horizons, each depending on the habits and knowledge being built now.

Horizon 2: connector-specific knowledge hubs

The same pattern that works for personal knowledge can apply to domain-specific institutional knowledge, starting with connectors. A vault or vault section per connector, structured around known issues and resolutions, API quirks and undocumented behavior, past incidents and root causes, performance characteristics, customer-reported edge cases, and migration history.

Agents would ingest existing sources automatically — pulling resolved tickets and extracting learnings, watching Slack channels for decisions and outcomes, reading PR descriptions and review comments to capture rationale.

In that world, an engineer picking up a connector they have not touched in months would start with “what broke with this API before?” or “what did we learn from the last migration?” and get answers powered by an accumulated vault, not just search across unstructured artifacts.

Open questions remain: how to bridge personal local-first vaults with team-wide knowledge without losing local control; how to handle ingestion quality so automated Slack or ticket ingestion captures signal rather than noise; how to detect and flag stale knowledge so agents do not rely on outdated decisions.

Horizon 3: agentic knowledge infrastructure

Once structured, queryable knowledge exists, agents can go beyond Q&A into actual workflow orchestration:

- Ticket agents that auto-triage new issues by checking the knowledge base for similar past incidents, suggest priorities and assignees, and capture resolution details back into the vault when tickets close.

- Standup replacements that read daily logs, tickets, PR activity, and Slack threads, synthesize per-person and per-project summaries, and flag misalignments like projects with no activity near deadlines.

- Decision audit trails that maintain a living map of key decisions and their downstream effects, check whether flagged risks have corresponding mitigations, and detect when code has diverged from original TRDs.

- Onboarding acceleration that generates personalized onboarding paths based on role, surfacing the most relevant decisions, risks, and TRDs for interactive Q&A over the accumulated knowledge.

None of this is built into Mnemosyne yet. The connector hub and agentic infrastructure only make sense if the knowledge base turns out to be rich enough after real usage — and the only way to know that is to use it.

For now, Mnemosyne is a small, local Claude Code skill hooked into Obsidian. It turns the session that would have been forgotten into a document. It turns the document into something queryable. The morning you open a new session and Claude already knows what you learned yesterday — that is the foundation we are betting on for everything that comes next.

Frequently Asked Questions

How do I set up Mnemosyne with Obsidian and Claude Code?

Install the Obsidian Local REST API plugin in your Obsidian vault, which exposes the vault over HTTP at localhost:27123. Create a Claude Code skill at ~/.claude/skills/setup-obsidian/SKILL.md that reads the API key from the plugin config and calls the REST API. Configure ~/.claude/CLAUDE.md with routing rules to invoke the skill for knowledge and documentation intents. The skill automatically handles vault operations without requiring manual commands.

What document types does Mnemosyne support?

Supported types include: Projects (project overviews), Daily Journal (work logs), Weekly Plans (goals and retrospectives), Meeting Notes (agendas and decisions), ADRs (architecture decision records), TRDs (technical designs), Risk Analysis (with scoring), Benchmarks (performance comparisons), Writeoffs (abandoned approaches), and general Notes. Each has a structured template and folder convention for easy querying.

How does Mnemosyne prevent the knowledge base from fragmenting into duplicate notes?

When creating a document, the skill always offers to append to an existing document rather than blindly creating a new one. It lists existing items in the target folder to detect duplicates. This keeps a risk register or ongoing TRD in one place, growing richer with each session instead of fragmenting across many files.

What happens at the start of a new session?

At session start, Claude Code reads the vault and CLAUDE.md file, loading context about active projects, open risks, and past decisions. The assistant can then answer questions like 'what did we learn from the last time we touched this connector?' or 'what was the reasoning behind choosing this extraction pattern?' using the accumulated vault history.

What are the three horizons of Mnemosyne's roadmap?

Horizon 1 (current): Personal second brain using Obsidian and Claude Code skill. Horizon 2 (connector knowledge hubs): Connector-specific vaults capturing API quirks, past incidents, and migration history, with agents ingesting Slack and PR descriptions automatically. Horizon 3 (agentic infrastructure): Agents that auto-triage tickets, synthesize standups, audit decision trails, and accelerate onboarding from accumulated knowledge.