This is Part 2 of our Apps Framework series. In Product to Platform Transformation, we covered why Atlan’s existing architecture couldn’t support platform-level extensibility: the observability gaps, the deployment bottleneck, and the decision to rebuild rather than patch. This post gets into how we solved the workflow orchestration layer itself, and what led to our Argo to Temporal migration.

The decision to rebuild was clear. The question was where to start, and how to do it without breaking hundreds of existing workflows, customers, and internal tools.

Workflow orchestration was the first layer we had to tackle. Argo had been running everything at Atlan for years: metadata crawlers, bots, onboarding flows, tenant operations, infra maintenance. It was the right tool for where we started, but staying on it was no longer viable.

What Pushed Migration

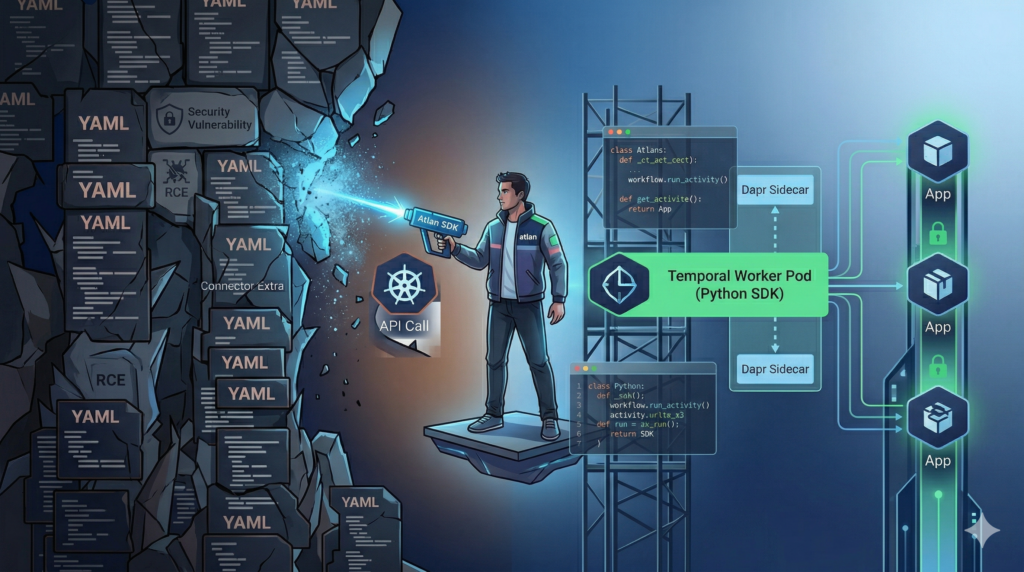

Two things made continuing on the existing setup no longer viable — a security assessment that forced a decision point, and the need to support customers running in environments where a full Kubernetes stack wasn’t an option.

Security and blast radius

Security reviews (including an external assessment) surfaced two critical gaps in how we had set up our workflow infrastructure:

- Over‑privileged Kubernetes service accounts could access “100+ sensitive secrets cluster‑wide,” enabling lateral movement.

- Our workflow configuration left a path open for remote code execution that could reach cloud metadata services and enumerate “400+ EC2 instances,” with a path to full infra compromise.

At the same time, we’d already chosen Temporal as the long‑term orchestration platform. Every hour we put into hardening our existing workflow infrastructure was an hour not spent on getting Temporal production‑ready.

We had to choose between a deep remediation of the existing setup and accelerating the Argo to Temporal migration.

Scale, rollout, and hybrid deployment

We had to support three realities simultaneously:

- Existing Atlan Cloud tenants running Argo‑only connectors

- New “app framework” connectors built directly on Temporal + Dapr

- Customer environments where we might only be allowed to run containers, not a full Kubernetes + Argo stack (for example, Secure Agent 2.0 on Docker/Podman)

We could not “turn off Argo” and flip a switch to Temporal. We needed a path that:

- Let us ship new connectors and publish flows on Temporal now. This was critical since we were building the `app-framework` and the Temporal stack in parallel

- Kept older Argo flows working

- Reduced Argo’s attack surface and operational load over time

What We Considered

When we started the migration, we looked at three broad options for how apps and workflows should run:

1. Fully native Temporal (ideal end state)
   - All orchestration (extraction, transform, publish, lineage, popularity) runs as Temporal workflows.
   - UI reads from app‑level metadata directly.
   - No Argo in the critical path.

2. Argo orchestrates native apps (interim stage)
   - Argo remains the outer workflow engine.
   - Individual steps (like extraction) call out to independent Temporal apps.
   - Publish and other capabilities move to apps over time.

3. Argo “crossover” apps (current state when we started)
   - Apps run inside Argo pods.
   - Auth/UI/config are handled by the “old” system.
   - Temporal is used under the hood but hidden inside the pod lifecycle.

Option 1 was where we wanted to end up, but it required:

- Fully native publish, lineage, and popularity apps

- A redesigned workflow monitoring UX

- A new marketplace and deployment experience for apps

We couldn’t block all migration work on that.

Option 2 was a pragmatic middle ground — Argo stays as the outer scheduler while individual steps call out to standalone Temporal apps. This gave us incremental migration without a full rewrite, but it still depended on Argo for workflow lifecycle, scheduling, and the user-visible run state.

Option 3 (crossover 1.0) let us embed Temporal workers inside Argo pods, but Argo still owned pod lifecycles, config, and logging. The orchestration benefits of Temporal — code‑first workflows, long‑lived state, activity retries — were hidden inside one Argo step.

We ended up choosing a combination of Options 2 and 3:

- Use Crossover 2.0 as the pragmatic bridge: Argo triggers Temporal‑based apps, which own the real business logic.

- In parallel, design the fully native architecture (UI → Heracles → Apps → Temporal, no Argo) and gradually move flows there.

What We Built

The migration happened in layers. We couldn’t flip a switch — we needed a new foundation first, then a bridge between the old and new worlds, and then a path to move flows to native Temporal progressively. Each layer had to be production-safe and independently shippable.

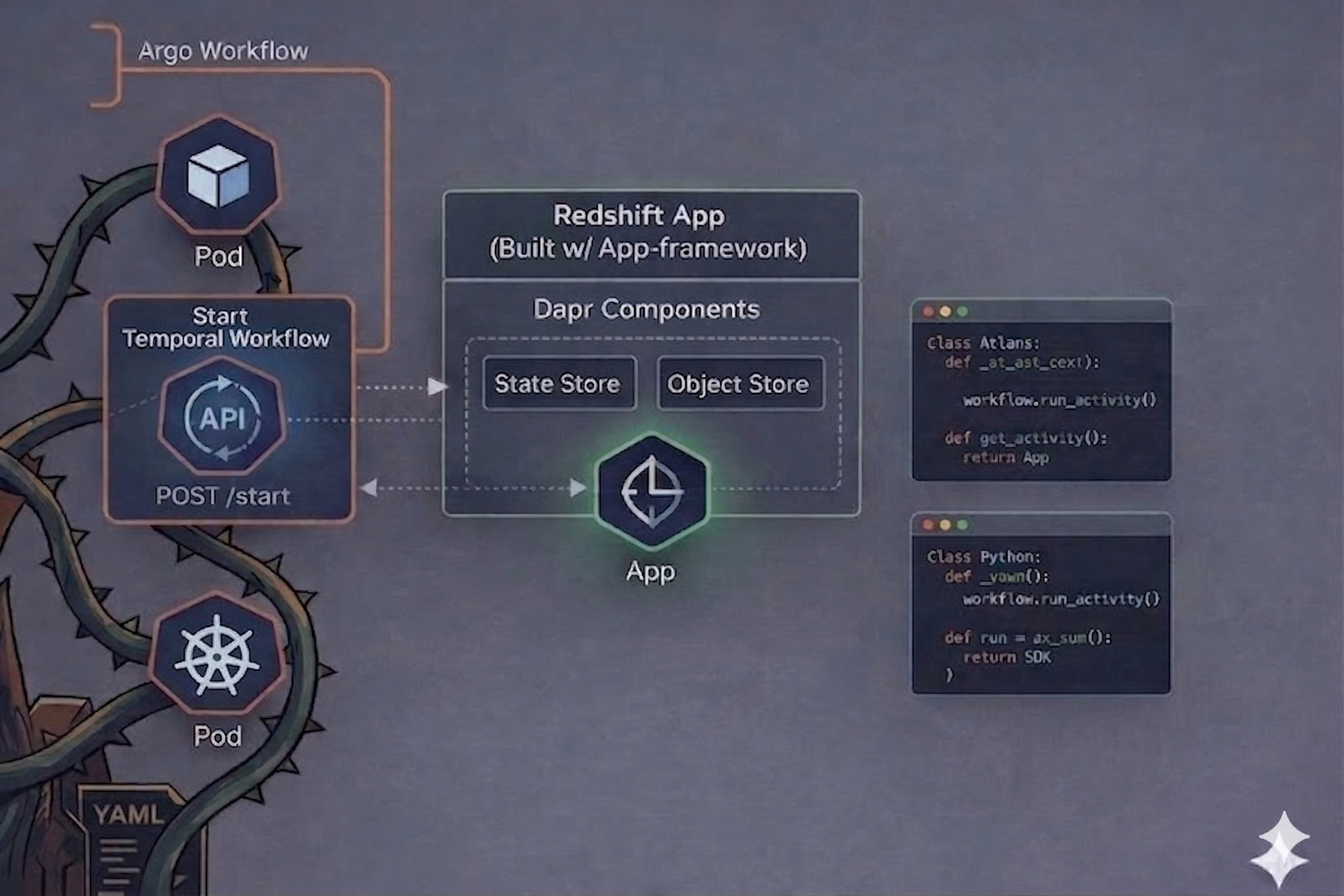

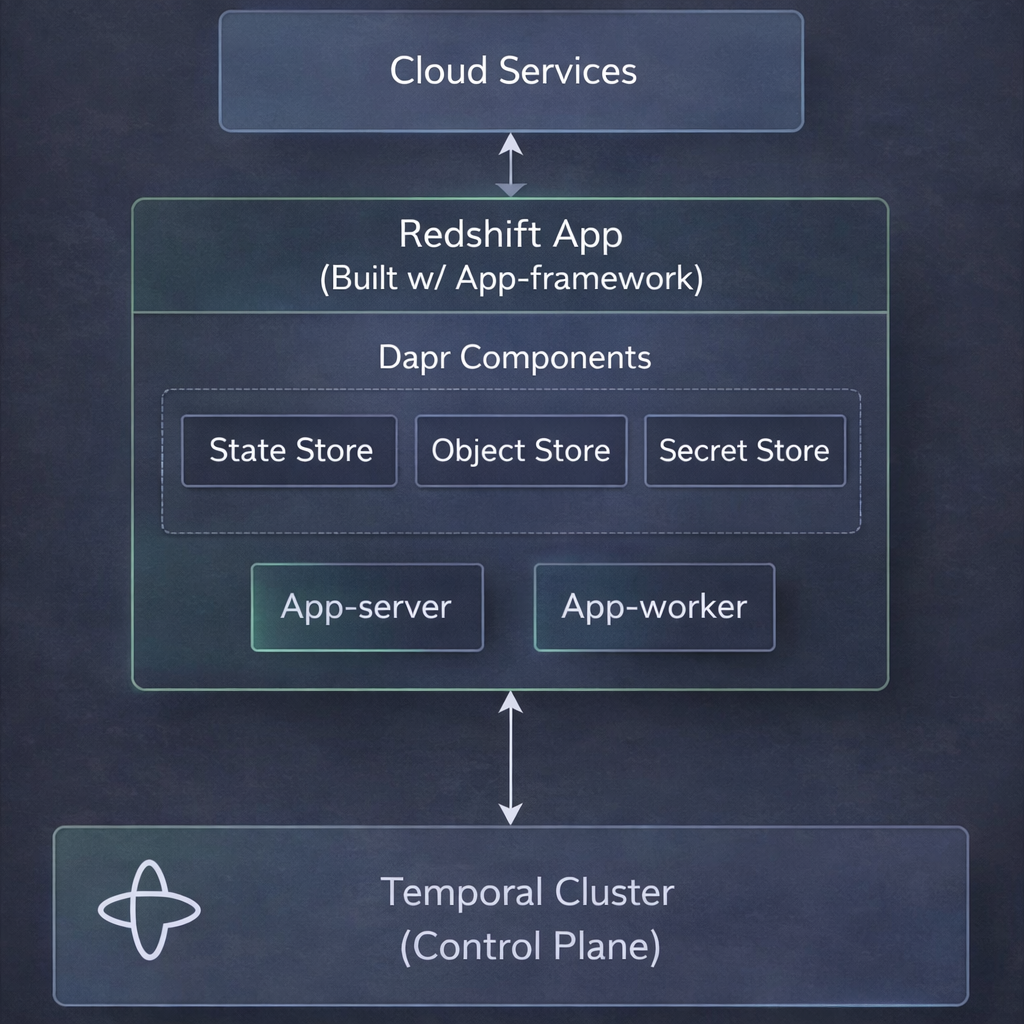

The App Framework: Temporal + Dapr as the foundation

The Atlan App Framework gives us a common way to build connector and utility apps:

- Temporal workflows and activities orchestrate extraction, processing, transformation, and state persistence.

- Dapr provides abstractions for the object store, state store (PostgreSQL), secret store (Vault), and other components (such as event stores and optional components that individual apps might need).

- Apps expose a FastAPI server to serve UI configs, API endpoints (for example, to test authentication or run preflight checks), and workflow control endpoints (`/uiconfigs`, `/start`, `/status`, etc.).

Connectors like Postgres, Redshift, Snowflake, and others are now implemented as standalone Temporal apps that:

- Run as independent Kubernetes deployments or as containers in Secure Agent 2.0.

- Use a shared application SDK for common patterns (object store writing, credential handling, retry, logging).

This gives us a consistent execution model across Atlan Cloud and customer infra.

Crossover 2.0: Argo as outer shell, Temporal as engine

Crossover 1.0 allowed engineering teams to build apps and replace Argo templates with them. The next step was to bridge existing Argo workflows and the new apps. That’s when we built Crossover 2.0:

- Argo still owns the high‑level workflow object and is what the UI triggers.

- Instead of Argo running the Temporal worker inside Argo pods, Temporal workers become Kubernetes deployments, and the Argo pod just calls the API endpoints in the Temporal worker.

This “one Argo step → many Temporal activities” mapping let us:

- Swap in Temporal apps behind existing Argo templates incrementally (connector by connector).

- Keep publish and downstream steps working while we migrated those parts to apps too (for example, the new publish app with Temporal orchestration).

Redshift and Postgres were some of the first connectors to go through this crossover path.
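The bridging step can be pictured as a small client that the Argo pod runs: start the workflow on the app, then poll until it finishes. What follows is a minimal sketch, not the actual implementation. It assumes the `/start` and `/status` endpoints exchange JSON; the `AppClient` interface, the `workflow_id` and `status` fields, and the status values are hypothetical.

```python
import time
from typing import Callable, Protocol


class AppClient(Protocol):
    """Hypothetical HTTP client for a Temporal-backed app (not the real SDK)."""

    def post(self, path: str, body: dict) -> dict: ...
    def get(self, path: str, params: dict) -> dict: ...


# Assumed status values; the real app may report different ones.
TERMINAL_STATES = {"COMPLETED", "FAILED", "CANCELED"}


def run_crossover_step(
    client: AppClient,
    config: dict,
    poll_seconds: float = 5.0,
    max_polls: int = 720,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Start a workflow on the app and block until it reaches a terminal state.

    The Argo step can succeed or fail based on the returned status, so Argo
    keeps owning the user-visible run state while Temporal does the work.
    """
    started = client.post("/start", config)
    workflow_id = started["workflow_id"]  # assumed response field
    for _ in range(max_polls):
        status = client.get("/status", {"workflow_id": workflow_id})["status"]
        if status in TERMINAL_STATES:
            return status
        sleep(poll_seconds)
    raise TimeoutError(f"workflow {workflow_id} did not finish in time")


class FakeClient:
    """In-memory stand-in so the sketch runs without a network."""

    def __init__(self, statuses):
        self._statuses = iter(statuses)

    def post(self, path, body):
        return {"workflow_id": "wf-1"}

    def get(self, path, params):
        return {"status": next(self._statuses)}


result = run_crossover_step(
    FakeClient(["RUNNING", "RUNNING", "COMPLETED"]),
    {"connector": "redshift"},
    sleep=lambda _: None,  # skip real waiting in the example
)
print(result)  # COMPLETED
```

Keeping the Argo side this thin is what makes the swap incremental: the template keeps its name and position in the DAG, and only the body of the step changes.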

Native Temporal architecture: UI → Apps → Temporal

While Crossover 2.0 was rolling out, we defined the native architecture that removes Argo from connector orchestration entirely.

Key pieces:

- Application servers (apps) expose REST endpoints for:
  - Workflow configuration (`/config`), scheduling, and control (`/start`, `/stop`, `/pause`, `/status`)
  - Serving UI schemas (`/uiconfigs`) that replace Kubernetes ConfigMaps

- Heracles (API layer) routes workflow submissions to app endpoints instead of Argo’s `/workflows/submit` API, via a new `POST /workflows/native/submit` path.

- Temporal is the only workflow engine: apps act as Temporal clients, submitting and running workflows; no Argo CRDs are involved in the run path.
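To make the routing concrete, here is a rough sketch of what a handler behind `POST /workflows/native/submit` might do: look up the target app and forward the configuration to its `/start` endpoint. The registry, the base URLs, the `submit_native` function, and the response shape are all hypothetical; this is an illustration, not Heracles code.

```python
from typing import Callable

# Hypothetical registry of app servers; in practice this would likely come
# from service discovery or configuration rather than a hard-coded dict.
APP_REGISTRY = {
    "postgres": "http://postgres-app:8000",
    "redshift": "http://redshift-app:8000",
}

# Any function that POSTs a JSON body to a URL and returns the JSON response.
Poster = Callable[[str, dict], dict]


def submit_native(app_name: str, workflow_config: dict, post: Poster) -> dict:
    """Sketch of the handler body for POST /workflows/native/submit."""
    base_url = APP_REGISTRY.get(app_name)
    if base_url is None:
        raise KeyError(f"no native app registered for {app_name!r}")
    # The app is the Temporal client; the API layer only forwards the request.
    return post(f"{base_url}/start", workflow_config)


# Fake transport so the example runs without a network.
calls = []


def fake_post(url: str, body: dict) -> dict:
    calls.append((url, body))
    return {"workflow_id": "wf-42"}  # assumed response shape


response = submit_native("postgres", {"schema_filter": "public"}, fake_post)
print(calls[0][0])  # http://postgres-app:8000/start
```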

The same pattern applies beyond connectors. For example:

- The new publish system is a single publish app pod with Temporal workflows partitioning, diffing, and publishing assets, instead of a multi‑pod Argo publish DAG.

- Platform workflows (like Horizon tenant operations, ES sharding, private link) use Temporal workers in dedicated namespaces and task queues instead of Argo templates.

Migration tooling: structuring the Argo to Temporal migration

For connectors that had years of history and dozens of Argo templates, we couldn’t rely on ad‑hoc rewrites. We built a Connector Migration Tooling System that defines a six‑phase pipeline:

1. Argo Flow Graph Generation
   - Parse WorkflowTemplate YAML into a machine‑readable DAG.
   - Enrich nodes with behavior (“does”), inputs/outputs, and S3 artifact maps.

2. Temporal Graph Generation
   - Map each Argo node to a Temporal activity.
   - Decide where to consolidate multiple Argo steps into one activity.

3. Per‑connector TRD (Technical Requirements Document)
   - Specify workflows, activities, clients, handlers, processors, and transformers to implement.

4. Implementation Phase
   - Build the SDK‑based app following the TRD.

5. Validation Phase
   - Run end‑to‑end with Temporal + Dapr locally.
   - Assert that `raw/`, `processed/`, and `transformed/` output directories are populated in the expected structure.

6. Parity Check Phase
   - Compare legacy Argo output vs new Temporal app output via a dedicated validation tool.
   - Compute per‑type `id_match_rate`, attribute differences, and classify any drift (transformer, extractor, key format, regression).
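As an illustration of the first two phases, the sketch below turns the DAG tasks of an already-parsed WorkflowTemplate into a dependency graph and derives an execution order and activity names from it. It assumes the template uses Argo's `dag.tasks` with `dependencies` lists; the task names, the sample template, and the snake_case naming rule for activities are made up for the example and are not the tooling's actual output format.

```python
from graphlib import TopologicalSorter

# A WorkflowTemplate as it might look after YAML parsing.
# Template and task names are invented for illustration.
workflow_template = {
    "kind": "WorkflowTemplate",
    "spec": {
        "templates": [
            {
                "name": "main",
                "dag": {
                    "tasks": [
                        {"name": "extract", "template": "extract-metadata"},
                        {
                            "name": "transform",
                            "template": "transform-metadata",
                            "dependencies": ["extract"],
                        },
                        {
                            "name": "publish",
                            "template": "publish-assets",
                            "dependencies": ["transform"],
                        },
                        {
                            "name": "lineage",
                            "template": "build-lineage",
                            "dependencies": ["transform"],
                        },
                    ]
                },
            }
        ]
    },
}


def build_flow_graph(template: dict) -> dict[str, set[str]]:
    """Map each DAG task name to the set of task names it depends on."""
    graph: dict[str, set[str]] = {}
    for tpl in template["spec"]["templates"]:
        for task in tpl.get("dag", {}).get("tasks", []):
            graph[task["name"]] = set(task.get("dependencies", []))
    return graph


def activity_plan(graph: dict[str, set[str]]) -> list[str]:
    """Return hypothetical activity names in a dependency-respecting order."""
    order = TopologicalSorter(graph).static_order()
    return [name.replace("-", "_") for name in order]


graph = build_flow_graph(workflow_template)
plan = activity_plan(graph)
print(plan[:2])  # ['extract', 'transform']
```

A real flow graph would also carry the enrichment data (behavior, inputs/outputs, artifact maps); the point here is only that once the DAG is machine-readable, the mapping to activities becomes a transformation that can be reviewed and repeated.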

This tooling gave us a repeatable way to move connectors off Argo while measuring fidelity to the old behavior.
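The parity check can be sketched in a few lines. The definition used here is an assumption: `id_match_rate` for a type is taken to be the fraction of legacy asset IDs of that type that also appear in the new output. The record shape (`type`, `id`, `attributes`) and the sample assets are likewise hypothetical.

```python
from collections import defaultdict


def id_match_rate(legacy: list[dict], candidate: list[dict]) -> dict[str, float]:
    """Per type: share of legacy IDs that the new output also produced."""
    legacy_ids, new_ids = defaultdict(set), defaultdict(set)
    for asset in legacy:
        legacy_ids[asset["type"]].add(asset["id"])
    for asset in candidate:
        new_ids[asset["type"]].add(asset["id"])
    return {t: len(ids & new_ids[t]) / len(ids) for t, ids in legacy_ids.items()}


def attribute_diffs(legacy: list[dict], candidate: list[dict]) -> dict:
    """For assets present in both outputs, list the attributes that differ."""
    new_by_key = {(a["type"], a["id"]): a["attributes"] for a in candidate}
    diffs = {}
    for asset in legacy:
        key = (asset["type"], asset["id"])
        if key not in new_by_key:
            continue  # missing assets are already counted by id_match_rate
        old, new = asset["attributes"], new_by_key[key]
        changed = sorted(k for k in old.keys() | new.keys() if old.get(k) != new.get(k))
        if changed:
            diffs[key] = changed
    return diffs


# Invented sample data: one table is missing, one column changed its type name.
legacy_output = [
    {"type": "Table", "id": "db/public/orders", "attributes": {"row_count": 10}},
    {"type": "Table", "id": "db/public/users", "attributes": {"row_count": 5}},
    {"type": "Column", "id": "db/public/orders/id", "attributes": {"data_type": "int4"}},
]
new_output = [
    {"type": "Table", "id": "db/public/orders", "attributes": {"row_count": 10}},
    {"type": "Column", "id": "db/public/orders/id", "attributes": {"data_type": "integer"}},
]

rates = id_match_rate(legacy_output, new_output)
diffs = attribute_diffs(legacy_output, new_output)
print(rates)  # {'Table': 0.5, 'Column': 1.0}
print(diffs)  # {('Column', 'db/public/orders/id'): ['data_type']}
```

In this example the missing table would show up as an extractor-style drift, while the `data_type` difference looks more like a transformer change; classifying drift along those lines is the part the sketch leaves out.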

Unified observability for Argo + Temporal

Moving orchestration to Temporal created an observability gap, especially for Crossover 2.0, where half the work runs in Argo pods and half in Temporal workers.

We addressed this with crossover live log streaming:

- A central service (Heracles) streams logs from:
  - The Argo Server API for Argo pods
  - Kubernetes logs for Temporal worker pods

- A `trace_id` and Argo metadata (`atlan-argo-workflow-id`, `atlan-argo-workflow-node`) propagate from Argo into Temporal via the app SDK.

- The log streamer filters K8s logs line‑by‑line by `trace_id={workflow-name}` and merges Argo + Temporal output into a single server‑sent events stream back to the UI.

Guardrails (15‑minute timeout, 20,000 line cap, Redis‑based concurrency limiter) keep live streams bounded.
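The filter-and-merge step can be sketched as a generator. This is a simplified illustration with two assumptions that are not from the actual streamer: each log line starts with an ISO‑8601 timestamp (so sorting lines as text orders them by time), and each source is already in time order. The Redis‑based concurrency limiter is left out, and the event names are invented.

```python
import heapq
import time
from typing import Callable, Iterable, Iterator


def merged_log_events(
    argo_lines: Iterable[str],
    worker_lines: Iterable[str],
    workflow_name: str,
    max_lines: int = 20_000,        # line cap from the guardrails above
    max_seconds: float = 15 * 60,   # 15-minute timeout from the guardrails above
    clock: Callable[[], float] = time.monotonic,
) -> Iterator[str]:
    """Yield server-sent events that interleave Argo and Temporal worker logs."""
    marker = f"trace_id={workflow_name}"
    argo = (("argo", line) for line in argo_lines)
    # Worker pods serve many runs, so keep only lines tagged with this trace.
    temporal = (("temporal", line) for line in worker_lines if marker in line)
    deadline = clock() + max_seconds
    # Assumes timestamp-prefixed lines, so text order equals time order.
    merged = heapq.merge(argo, temporal, key=lambda item: item[1])
    for count, (source, line) in enumerate(merged):
        if count >= max_lines or clock() >= deadline:
            yield "event: truncated\ndata: stream limit reached\n\n"
            return
        yield f"event: {source}\ndata: {line}\n\n"


argo_logs = [
    "2024-01-01T00:00:01Z argo: step started",
    "2024-01-01T00:00:04Z argo: step finished",
]
worker_logs = [
    "2024-01-01T00:00:02Z trace_id=wf-1 extracting tables",
    "2024-01-01T00:00:03Z trace_id=wf-2 unrelated run",
]

events = list(merged_log_events(argo_logs, worker_logs, "wf-1"))
print(len(events))  # 3
```

Bounding the stream inside the generator means a client that never disconnects still releases its slot once the cap or the deadline is hit.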

In parallel, we started building Temporal‑first metrics and alerting, where Temporal emits events into S3 / lakehouse, and new workflow signals are derived from that instead of Kubernetes events.

Results & Lessons Learned

We’re still mid‑migration — the work is spread across connectors, publish, platform workflows, and Secure Agent, and we don’t yet have a single global metric like “X% of workflows now run on Temporal only.” But the direction is measurable in concrete ways, and the mistakes we made taught us things that hard numbers wouldn’t capture.

Here are the concrete changes we can point to:

- Security posture
  - We removed a major class of risk (workflow‑driven RCE + over‑privileged Argo service accounts) from new features by defaulting them to Temporal‑based apps and limiting Argo’s role to interim crossover flows.
  - Temporal threat modeling is part of the design, so we don’t carry the same gaps forward.

- Developer experience
  - New connectors and apps are built as Python code using the application SDK, not as YAML DAGs. This allows developers to add breakpoints, test their changes locally and iterate faster.
  - Migrating a connector now has a documented, phase‑gated process with parity checks, instead of a one‑off rewrite.

- Runtime flexibility
  - The same Temporal‑based connector can run in Atlan Cloud or customer environments (for example, Secure Agent 2.0’s per‑app containers) without embedding Argo or K3s on the customer side.

- Operational control
  - Platform workflows like ES sharding and PrivateLink provisioning are owned by Horizon + Temporal rather than bespoke Argo templates spread across clusters.

Three things stood out from the mistakes:

- You can’t skip the interim architecture. We initially hoped to jump from “Argo everywhere” to “Temporal native” quickly. In reality, Crossover 2.0 and “Argo orchestrates apps” have been essential:
  - They let us ship Temporal apps while UI, marketplace, and monitoring still expected Argo.
  - They provided a safety net: toggling `enable_crossover` or `enable_publish_app_executor` allowed per‑tenant rollouts and fallbacks.

- Observability has to evolve with orchestration. Our first cross‑stack flows had great Temporal logs and opaque user experiences. The live‑logs project (Argo + Temporal worker logs joined by `trace_id`) was critical to make the crossover architecture debuggable for support and field teams.

- Migration needs structure, not heroics. Without the migration tooling and TRD pipeline, “move connector X to the new framework” degenerated into one‑off efforts that were hard to validate or reproduce. Defining explicit phases, artifacts, and acceptance criteria forced us to treat migration as an engineering product, not a collection of tasks.
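The per‑tenant safety net mentioned in the first lesson can be illustrated with a small sketch. The flag names come from the rollout described above, but how they are stored and what exactly each one switches is an assumption here; the `TenantFlags` type, the `execution_plan` function, and the executor labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantFlags:
    """Hypothetical per-tenant flags; both default to the legacy Argo path."""

    enable_crossover: bool = False
    enable_publish_app_executor: bool = False


def execution_plan(flags: TenantFlags) -> dict[str, str]:
    """Pick an executor per stage, falling back to Argo when a flag is off."""
    return {
        "extract": "temporal-app" if flags.enable_crossover else "argo-template",
        "publish": "publish-app" if flags.enable_publish_app_executor else "argo-publish-dag",
    }


# A tenant partway through the rollout: extraction migrated, publish not yet.
plan = execution_plan(TenantFlags(enable_crossover=True))
print(plan)  # {'extract': 'temporal-app', 'publish': 'argo-publish-dag'}
```

Because each stage has its own switch, a regression in one stage can be rolled back for a single tenant without touching the other stage or other tenants.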

What’s Next

We’re not done. Three threads are actively in progress as we work toward fully native Temporal and a clean decommission of the existing setup:

- Automation Engine / Native Automation. We’re moving customer‑facing workflow entities (like Redshift extraction + publish) onto a fully native automation engine where the Atlan UI talks to Temporal‑backed apps directly, with no Argo in the path.

- End‑to‑end Temporal observability. We’re building Temporal‑driven metrics and alerting (Temporal → S3 → lakehouse) and a new workflow signals platform so teams can get the same or better visibility than they had with Argo + Numaflow, but without Kubernetes events in the loop.

- Decommissioning Argo safely. We still rely on Argo for some internal workflows and as the outer shell for crossover connectors. The long‑term plan is:
  - Migrate remaining connectors and publish flows via the migration framework.
  - Move remaining onboarding and infra workflows to Temporal where it makes sense.
  - Gradually retire Argo’s permissions and surface area until it’s no longer part of the production run path.

We’re still learning, but the direction is clear: code‑first workflows on Temporal, apps as the execution unit, and our Argo to Temporal migration gradually reaching completion.

In the next post, we’ll go deeper into the App Framework itself — how we designed the execution boundary, the SDK, and the multi-tenant isolation model that makes it safe for partners and customers to build on Atlan.